Difference between revisions of "Chapter 15: Engineering Foundations"

(→Measurement) |

(→Levels (Scales) of Measurement) |

||

| Line 512: | Line 512: | ||

define measures from an operational perspective. | define measures from an operational perspective. | ||

| − | ==Levels (Scales) of Measurement== | + | ===Levels (Scales) of Measurement=== |

{{RightSection| {{referenceLink|number=4|parts=c3s2}} {{referenceLink|number=6|parts=c7s5}}}} | {{RightSection| {{referenceLink|number=4|parts=c3s2}} {{referenceLink|number=6|parts=c7s5}}}} | ||

Revision as of 05:25, 29 August 2015

Contents

- CAD

- Computer-Aided Design

- CMMI

- Capability Maturity Model Integration

- Probability Density Function

- pmf

- Probability Mass Function

- RCA

- Root Cause Analysis

- SDLC

- Software Development Life Cycle

IEEE defines engineering as “the application of a systematic, disciplined, quantifiable approach to structures, machines, products, systems or processes” [1]. This chapter outlines some of the engineering foundational skills and techniques that are useful for a software engineer. The focus is on topics that support other KAs while minimizing duplication of subjects covered elsewhere in this document.

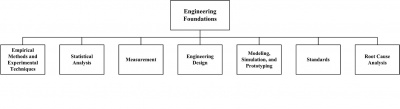

As the theory and practice of software engineering matures, it is increasingly apparent that software engineering is an engineering discipline that is based on knowledge and skills common to all engineering disciplines. This Engineering Foundations knowledge area (KA) is concerned with the engineering foundations that apply to software engineering and other engineering disciplines. Topics in this KA include empirical methods and experimental techniques; statistical analysis; measurement; engineering design; modeling, prototyping, and simulation; standards; and root cause analysis. Application of this knowledge, as appropriate, will allow software engineers to develop and maintain software more efficiently and effectively. Completing their engineering work efficiently and effectively is a goal of all engineers in all engineering disciplines.

The breakdown of topics for the Engineering Foundations KA is shown in Figure 15.1.

1 Empirical Methods and Experimental Techniques

An engineering method for problem solving involves proposing solutions or models of solutions and then conducting experiments or tests to study the proposed solutions or models. Thus, engineers must understand how to create an experiment and then analyze the results of the experiment in order to evaluate the proposed solution. Empirical methods and experimental techniques help the engineer to describe and understand variability in their observations, to identify the sources of variability, and to make decisions.

Three different types of empirical studies commonly used in engineering efforts are designed experiments, observational studies, and retrospective studies. Brief descriptions of the commonly used methods are given below.

1.1 Designed Experiment

A designed or controlled experiment is an investigation of a testable hypothesis where one or more independent variables are manipulated to measure their effect on one or more dependent variables. A precondition for conducting an experiment is the existence of a clear hypothesis. It is important for an engineer to understand how to formulate clear hypotheses.

Designed experiments allow engineers to determine in precise terms how the variables are related and, specifically, whether a cause-effect relationship exists between them. Each combination of values of the independent variables is a treatment. The simplest experiments have just two treatments representing two levels of a single independent variable (e.g., using a tool vs. not using a tool). More complex experimental designs arise when more than two levels, more than one independent variable, or any dependent variables are used.

1.2 Observational Study

An observational or case study is an empirical inquiry that makes observations of processes or phenomena within a real-life context. While an experiment deliberately ignores context, an observational or case study includes context as part of the observation. A case study is most useful when the focus of the study is on how and why questions, when the behavior of those involved in the study cannot be manipulated, and when contextual conditions are relevant and the boundaries between the phenomena and context are not clear.

1.3 Retrospective Study

A retrospective study involves the analysis of historical data. Retrospective studies are also known as historical studies. This type of study uses data (regarding some phenomenon) that has been archived over time. This archived data is then analyzed in an attempt to find a relationship between variables, to predict future events, or to identify trends. The quality of the analysis results will depend on the quality of the information contained in the archived data. Historical data may be incomplete, inconsistently measured, or incorrect.

2 Statistical Analysis

In order to carry out their responsibilities, engineers must understand how different product and process characteristics vary. Engineers often come across situations where the relationship between different variables needs to be studied. An important point to note is that most of the studies are carried out on the basis of samples and so the observed results need to be understood with respect to the full population. Engineers must, therefore, develop an adequate understanding of statistical techniques for collecting reliable data in terms of sampling and analysis to arrive at results that can be generalized. These techniques are discussed below.

2.1 Unit of Analysis (Sampling Units), Population, and Sample

Unit of analysis. While carrying out any empirical study, observations need to be made on chosen units called the units of analysis or sampling units. The unit of analysis must be identified and must be appropriate for the analysis. For example, when a software product company wants to find the perceived usability of a software product, the user or the software function may be the unit of analysis.

Population. The set of all respondents or items (possible sampling units) to be studied forms the population. As an example, consider the case of studying the perceived usability of a software product. In this case, the set of all possible users forms the population.

While defining the population, care must be exercised to understand the study and target population. There are cases when the population studied and the population for which the results are being generalized may be different. For example, when the study population consists of only past observations and generalizations are required for the future, the study population and the target population may not be the same.

Sample. A sample is a subset of the population. The most crucial issue towards the selection of a sample is its representativeness, including size. The samples must be drawn in a manner so as to ensure that the draws are independent, and the rules of drawing the samples must be predefined so that the probability of selecting a particular sampling unit is known beforehand. This method of selecting samples is called probability sampling.

Random variable. In statistical terminology, the process of making observations or measurements on the sampling units being studied is referred to as conducting the experiment. For example, if the experiment is to toss a coin 10 times and then count the number of times the coin lands on heads, each 10 tosses of the coin is a sampling unit and the number of heads for a given sample is the observation or outcome for the experiment. The outcome of an experiment is obtained in terms of real numbers and defines the random variable being studied. Thus, the attribute of the items being measured at the outcome of the experiment represents the random variable being studied; the observation obtained from a particular sampling unit is a particular realization of the random variable. In the example of the coin toss, the random variable is the number of heads observed for each experiment. In statistical studies, attempts are made to understand population characteristics on the basis of samples.

The set of possible values of a random variable may be finite or infinite but countable (e.g., the set of all integers or the set of all odd numbers). In such a case, the random variable is called a discrete random variable. In other cases, the random variable under consideration may take values on a continuous scale and is called a continuous random variable.

Event. A subset of possible values of a random variable is called an event. Suppose X denotes some random variable; then, for example, we may define different events such as X ³ x or X < x and so on.

Distribution of a random variable. The range and pattern of variation of a random variable is given by its distribution. When the distribution of a random variable is known, it is possible to compute the chance of any event. Some distributions are found to occur commonly and are used to model many random variables occurring in practice in the context of engineering. A few of the more commonly occurring distributions are given below.

- Binomial distribution: used to model random variables that count the number of successes in n trials carried out independently of each other, where each trial results in success or failure. We make an assumption that the chance of obtaining a success remains constant [2*, c3s6].

- Poisson distribution: used to model the count of occurrence of some event over time or space [2*, c3s9].

- Normal distribution: used to model continuous random variables or discrete random variables by taking a very large number of values [2*, c4s6].

Concept of parameters. A statistical distribution is characterized by some parameters. For example, the proportion of success in any given trial is the only parameter characterizing a binomial distribution. Similarly, the Poisson distribution is characterized by a rate of occurrence. A normal distribution is characterized by two parameters: namely, its mean and standard deviation.

Once the values of the parameters are known, the distribution of the random variable is completely known and the chance (probability) of any event can be computed. The probabilities for a discrete random variable can be computed through the probability mass function, called the pmf. The pmf is defined at discrete points and gives the point mass—i.e., the probability that the random variable will take that particular value. Likewise, for a continuous random variable, we have the probability density function, called the pdf. The pdf is very much like density and needs to be integrated over a range to obtain the probability that the continuous random variable lies between certain values. Thus, if the pdf or pmf is known, the chances of the random variable taking certain set of values may be computed theoretically.

Concept of estimation [2*, c6s2, c7s1, c7s3]. The true values of the parameters of a distribution are usually unknown and need to be estimated from the sample observations. The estimates are functions of the sample values and are called statistics. For example, the sample mean is a statistic and may be used to estimate the population mean. Similarly, the rate of occurrence of defects estimated from the sample (rate of defects per line of code) is a statistic and serves as the estimate of the population rate of rate of defects per line of code. The statistic used to estimate some population parameter is often referred to as the estimator of the parameter.

A very important point to note is that the results of the estimators themselves are random. If we take a different sample, we are likely to get a different estimate of the population parameter. In the theory of estimation, we need to understand different properties of estimators—particularly, how much the estimates can vary across samples and how to choose between different alternative ways to obtain the estimates. For example, if we wish to estimate the mean of a population, we might use as our estimator a sample mean, a sample median, a sample mode, or the midrange of the sample. Each of these estimators has different statistical properties that may impact the standard error of the estimate.

Types of estimates [2*, c7s3, c8s1].There are two types of estimates: namely, point estimates and interval estimates. When we use the value of a statistic to estimate a population parameter, we get a point estimate. As the name indicates, a point estimate gives a point value of the parameter being estimated.

Although point estimates are often used, they leave room for many questions. For instance, we are not told anything about the possible size of error or statistical properties of the point estimate. Thus, we might need to supplement a point estimate with the sample size as well as the variance of the estimate. Alternately, we might use an interval estimate. An interval estimate is a random interval with the lower and upper limits of the interval being functions of the sample observations as well as the sample size. The limits are computed on the basis of some assumptions regarding the sampling distribution of the point estimate on which the limits are based.

Properties of estimators. Various statistical properties of estimators are used to decide about the appropriateness of an estimator in a given situation. The most important properties are that an estimator is unbiased, efficient, and consistent with respect to the population.

Tests of hypotheses [2*, c9s1].A hypothesis is a statement about the possible values of a parameter. For example, suppose it is claimed that a new method of software development reduces the occurrence of defects. In this case, the hypothesis is that the rate of occurrence of defects has reduced. In tests of hypotheses, we decide—on the basis of sample observations—whether a proposed hypothesis should be accepted or rejected.

For testing hypotheses, the null and alternative hypotheses are formed. The null hypothesis is the hypothesis of no change and is denoted as H0. The alternative hypothesis is written as H1. It is important to note that the alternative hypothesis may be one-sided or two-sided. For example, if we have the null hypothesis that the population mean is not less than some given value, the alternative hypothesis would be that it is less than that value and we would have a one-sided test. However, if we have the null hypothesis that the population mean is equal to some given value, the alternative hypothesis would be that it is not equal and we would have a two-sided test (because the true value could be either less than or greater than the given value).

In order to test some hypothesis, we first compute some statistic. Along with the computation of the statistic, a region is defined such that in case the computed value of the statistic falls in that region, the null hypothesis is rejected. This region is called the critical region (also known as the confidence interval). In tests of hypotheses, we need to accept or reject the null hypothesis on the basis of the evidence obtained. We note that, in general, the alternative hypothesis is the hypothesis of interest. If the computed value of the statistic does not fall inside the critical region, then we cannot reject the null hypothesis. This indicates that there is not enough evidence to believe that the alternative hypothesis is true. As the decision is being taken on the basis of sample observations, errors are possible; the types of such errors are summarized in the following table.

| Nature | Statistical Decision | |

|---|---|---|

| Accept H0 | Reject H0 | |

| H0 is true | OK | Type I error (probability = a) |

| H0 is false | Type II error (probability = b) | OK |

In test of hypotheses, we aim at maximizing the power of the test (the value of 1−b) while ensuring that the probability of a type I error (the value of a) is maintained within a particular value— typically 5 percent.

It is to be noted that construction of a test of hypothesis includes identifying statistic(s) to estimate the parameter(s) and defining a critical region such that if the computed value of the statistic falls in the critical region, the null hypothesis is rejected.

2.2 Concepts of Correlation and Regression

A major objective of many statistical investigations is to establish relationships that make it possible to predict one or more variables in terms of others. Although it is desirable to predict a quantity exactly in terms of another quantity, it is seldom possible and, in many cases, we have to be satisfied with estimating the average or expected values.

The relationship between two variables is studied using the methods of correlation and regression. Both these concepts are explained briefly in the following paragraphs.

Correlation. The strength of linear relationship between two variables is measured using the correlation coefficient. While computing the correlation coefficient between two variables, we assume that these variables measure two different attributes of the same entity. The correlation coefficient takes a value between –1 to +1. The values –1 and +1 indicate a situation when the association between the variables is perfect—i.e., given the value of one variable, the other can be estimated with no error. A positive correlation coefficient indicates a positive relationship—that is, if one variable increases, so does the other. On the other hand, when the variables are negatively correlated, an increase of one leads to a decrease of the other.

It is important to remember that correlation does not imply causation. Thus, if two variables are correlated, we cannot conclude that one causes the other.

Regression. The correlation analysis only measures the degree of relationship between two variables. The analysis to find the relationship between two variables is called regression analysis. The strength of the relationship between two variables is measured using the coefficient of determination. This is a value between 0 and 1. The closer the coefficient is to 1, the stronger the relationship between the variables. A value of 1 indicates a perfect relationship.

3 Measurement

Knowing what to measure and which measurement method to use is critical in engineering endeavors. It is important that everyone involved in an engineering project understand the measurement methods and the measurement results that will be used.

Measurements can be physical, environmental, economic, operational, or some other sort of measurement that is meaningful for the particular project. This section explores the theory of measurement and how it is fundamental to engineering. Measurement starts as a conceptualization then moves from abstract concepts to definitions of the measurement method to the actual application of that method to obtain a measurement result. Each of these steps must be understood, communicated, and properly employed in order to generate usable data. In traditional engineering, direct measures are often used. In software engineering, a combination of both direct and derived measures is necessary [6*, p273].

The theory of measurement states that measurement is an attempt to describe an underlying real empirical system. Measurement methods define activities that allocate a value or a symbol to an attribute of an entity.

Attributes must then be defined in terms of the operations used to identify and measure them— that is, the measurement methods. In this approach, a measurement method is defined to be a precisely specified operation that yields a number (called the measurement result) when measuring an attribute. It follows that, to be useful, the measurement method has to be well defined. Arbitrariness in the method will reflect itself in ambiguity in the measurement results.

In some cases—particularly in the physical world—the attributes that we wish to measure are easy to grasp; however, in an artificial world like software engineering, defining the attributes may not be that simple. For example, the attributes of height, weight, distance, etc. are easily and uniformly understood (though they may not be very easy to measure in all circumstances), whereas attributes such as software size or complexity require clear definitions.

Operational definitions. The definition of attributes, to start with, is often rather abstract. Such definitions do not facilitate measurements. For example, we may define a circle as a line forming a closed loop such that the distance between any point on this line and a fixed interior point called the center is constant. We may further say that the fixed distance from the center to any point on the closed loop gives the radius of the circle. It may be noted that though the concept has been defined, no means of measuring the radius has been proposed. The operational definition specifies the exact steps or method used to carry out a specific measurement. This can also be called the measurement method; sometimes a measurement procedure may be required to be even more precise.

The importance of operational definitions can hardly be overstated. Take the case of the apparently simple measurement of height of individuals. Unless we specify various factors like the time when the height will be measured (it is known that the height of individuals vary across various time points of the day), how the variability due to hair would be taken care of, whether the measurement will be with or without shoes, what kind of accuracy is expected (correct up to an inch, 1/2 inch, centimeter, etc.) — even this simple measurement will lead to substantial variation. Engineers must appreciate the need to define measures from an operational perspective.

3.1 Levels (Scales) of Measurement

Once the operational definitions are determined, the actual measurements need to be undertaken. It is to be noted that measurement may be carried out in four different scales: namely, nominal, ordinal, interval, and ratio. Brief descriptions of each are given below.

Nominal scale: This is the lowest level of measurement and represents the most unrestricted assignment of numerals. The numerals serve only as labels, and words or letters would serve as well. The nominal scale of measurement involves only classification and the observed sampling units are put into any one of the mutually exclusive and collectively exhaustive categories (classes). Some examples of nominal scales are:

- Job titles in a company

- The software development life cycle (SDLC) model (like waterfall, iterative, agile, etc.) followed by different software projects

In nominal scale, the names of the different categories are just labels and no relationship between them is assumed. The only operations that can be carried out on nominal scale is that of counting the number of occurrences in the different classes and determining if two occurrences have the same nominal value. However, statistical analyses may be carried out to understand how entities belonging to different classes perform with respect to some other response variable.

Ordinal scale: Refers to the measurement scale where the different values obtained through the process of measurement have an implicit ordering. The intervals between values are not specified and there is no objectively defined zero element. Typical examples of measurements in ordinal scales are:

- Skill levels (low, medium, high)

- Capability Maturity Model Integration (CMMI) maturity levels of software development organizations

- Level of adherence to process as measured in a 5-point scale of excellent, above average, average, below average, and poor, indicating

the range from total adherence to no adherence at all

Measurement in ordinal scale satisfies the transitivity property in the sense that if A > B and B > C, then A > C. However, arithmetic operations cannot be carried out on variables measured in ordinal scales. Thus, if we measure customer satisfaction on a 5-point ordinal scale of 5 implying a very high level of satisfaction and 1 implying a very high level of dissatisfaction, we cannot say that a score of four is twice as good as a score of two. So, it is better to use terminology such as excellent, above average, average, below average, and poor than ordinal numbers in order to avoid the error of treating an ordinal scale as a ratio scale. It is important to note that ordinal scale measures are commonly misused and such misuse can lead to erroneous conclusions [6*, p274]. A common misuse of ordinal scale measures is to present a mean and standard deviation for the data set, both of which are meaningless. However, we can find the median, as computation of the median involves counting only.

Interval scales: With the interval scale, we come to a form that is quantitative in the ordinary sense of the word. Almost all the usual statistical measures are applicable here, unless they require knowledge of a true' zero point. The zero point on an interval scale is a matter of convention. Ratios do not make sense, but the difference between levels of attributes can be computed and is meaningful. Some examples of interval scale of measurement follow:

- Measurement of temperature in different scales, such as Celsius and Fahrenheit. Suppose T1 and T2 are temperatures measured in some scale. We note that the fact that T1 is twice T2 does not mean that one object is twice as hot as another. We also note that the zero points are arbitrary.

- Calendar dates. While the difference between dates to measure the time elapsed is a meaningful concept, the ratio does not make sense.

- Many psychological measurements aspire to create interval scales. Intelligence is often measured in interval scale, as it is not necessary to define what zero intelligence would mean.

If a variable is measured in interval scale, most of the usual statistical analyses like mean, standard deviation, correlation, and regression may be carried out on the measured values.

Ratio scale: These are quite commonly encountered in physical science. These scales of measures are characterized by the fact that operations exist for determining all 4 relations: equality, rank order, equality of intervals, and equality of ratios. Once such a scale is available, its numerical values can be transformed from one unit to another by just multiplying by a constant, e.g., conversion of inches to feet or centimeters. When measurements are being made in ratio scale, existence of a nonarbitrary zero is mandatory. All statistical measures are applicable to ratio scale; logarithm usage is valid only when these scales are used, as in the case of decibels. Some examples of ratio measures are

- the number of statements in a software program

- temperature measured in the Kelvin (K) scale or in Fahrenheit (F).

An additional measurement scale, the absolute scale, is a ratio scale with uniqueness of the measure; i.e., a measure for which no transformation is possible (for example, the number of programmers working on a project).